Career services leaders facing higher education budget cuts often try to evaluate programs through an impact lens — but when everything remaining has impact, impact can’t differentiate. This article introduces a two-level decision framework that replaces “impact” with two more honest questions: how much capacity does this program consume relative to its student reach, and is your institution willing to resource it — not just endorse it?

For guidance on navigating the hard conversations that follow — with staff and with leadership — see how to decide what to cut in career services without losing what matters.

It includes specific linguistic reframes that shift conversations from blame to structure, NACE data on career services staffing ratios, and a self-assessment exercise for mapping your program portfolio before your next planning conversation.

Your team is working harder than they were two years ago. Morale is dipping. Everything takes longer. But when you look at your program list, nothing has actually changed. The same services, the same goals, the same expectations.

The instinct is to blame time management, hiring gaps, or individual capacity. But the real issue is usually structural: when institutions cut staff without cutting reach expectations, the ratio of effort to output shifts invisibly. Programs that were manageable with five people become unsustainable with three — not because the program changed, but because the capacity around it did.

From the webinar: “Now I understand why my team’s feeling so stretched, even though our priorities haven’t really changed.” — Rebekah Paré
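To make the five-to-three shift concrete, here is a minimal Python sketch of the arithmetic. The 150 weekly hours and the function name `hours_per_person` are illustrative assumptions, not figures from the webinar.

```python
def hours_per_person(total_weekly_hours: float, staff_count: int) -> float:
    """Spread a fixed program workload evenly across the available staff."""
    if staff_count < 1:
        raise ValueError("staff_count must be at least 1")
    return total_weekly_hours / staff_count


# Hypothetical: the program list needs 150 staff hours a week, before and after cuts.
PROGRAM_HOURS = 150.0

load_with_five = hours_per_person(PROGRAM_HOURS, 5)
load_with_three = hours_per_person(PROGRAM_HOURS, 3)

# Relative increase in each person's load when the program list stays the same.
increase = (load_with_three - load_with_five) / load_with_five

print(f"{load_with_five:.0f} h -> {load_with_three:.0f} h per person (+{increase:.0%})")
```

Under these assumed numbers, nothing on the program list changes, yet each remaining person carries about two-thirds more work.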

There’s a diagnostic tool that can help you see where the strain is actually concentrating. It takes about 15 minutes, and it will change how you think about your service portfolio.

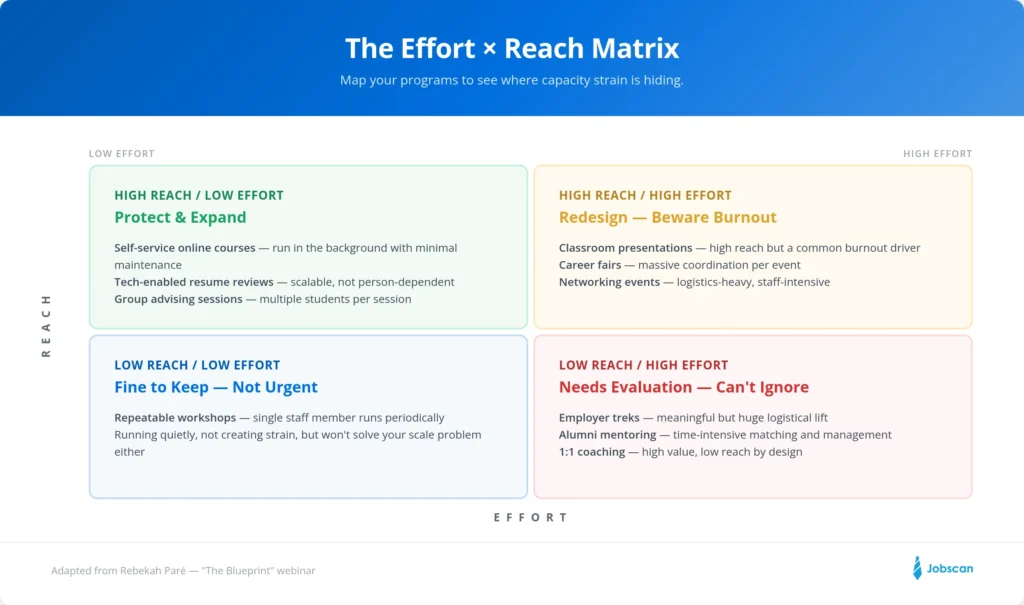

The Effort × Reach Diagnostic

The framework maps your programs across two dimensions:

Reach is how broadly access is distributed in a typical cycle — a semester, a year, whatever timeframe fits your center. This lens is specifically about breadth, because breadth is what institutions are increasingly asking for. Depth matters, but it’s not what this diagnostic measures.

Effort is the ongoing staff time, coordination, customization, and invisible work required to sustain something at its current quality. Low-effort programs are repeatable, not person-dependent, and survive staffing changes. High-effort programs require ongoing coordination, special handling, or depend on a few key people.

Key Insight: If someone left your team tomorrow, would this program bottom out? If the answer is yes, that’s a signal about effort dependency worth paying attention to.

When you map your programs across these two dimensions, four zones emerge — and where your work clusters tells you something important about your portfolio’s health.
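If you prefer a spreadsheet-style approach, the mapping can be sketched in a few lines of Python. This is only an illustration: the 1 to 5 scoring scale, the midpoint threshold of 3, and the names `Program` and `zone` are assumptions made for this sketch, not part of the framework as presented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Program:
    name: str
    reach: int   # assumed scale: 1 (few students) to 5 (most students)
    effort: int  # assumed scale: 1 (runs itself) to 5 (constant coordination)


def zone(program: Program, threshold: int = 3) -> str:
    """Place a program in one of the four zones using a simple cutoff."""
    reach = "High Reach" if program.reach >= threshold else "Low Reach"
    effort = "High Effort" if program.effort >= threshold else "Low Effort"
    return f"{reach}, {effort}"


# Hypothetical scores for two programs.
course = Program("Online resume course", reach=5, effort=1)
trek = Program("Employer trek", reach=1, effort=5)

print(zone(course))
print(zone(trek))
```

The cutoff is deliberately crude. The diagnostic only needs directional placement, so a single threshold is enough to start the conversation.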

What the Four Zones Look Like in Practice

High Reach, Low Effort — Protect These

These are your scalable wins. Once running, they serve students in the background with minimal ongoing staff involvement.

Examples: Self-service online courses, technology-enabled resume reviews (tools like Jobscan), group advising sessions. An online resume course takes significant effort to develop, but once it’s running, it can almost run itself with periodic maintenance. Group coaching reaches multiple students per session at a fraction of the per-student cost of 1:1 work.

From the webinar: “Once we’ve got it going, it can almost run itself with some maintenance.” — Rebekah Paré
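The per-student cost comparison between group coaching and 1:1 work can be sketched as simple arithmetic. All of the minutes and group sizes below are hypothetical placeholders, and `staff_minutes_per_student` is a name invented for this example.

```python
def staff_minutes_per_student(session_minutes: float,
                              prep_minutes: float,
                              students: int) -> float:
    """Staff time spent per student for one session, including prep."""
    if students < 1:
        raise ValueError("students must be at least 1")
    return (session_minutes + prep_minutes) / students


# Hypothetical: a 45-minute 1:1 appointment with 15 minutes of prep.
one_to_one = staff_minutes_per_student(45, 15, students=1)

# Hypothetical: a 60-minute group session with 30 minutes of prep for 8 students.
group = staff_minutes_per_student(60, 30, students=8)

ratio = group / one_to_one
print(f"1:1: {one_to_one} min/student, group: {group} min/student ({ratio:.0%})")
```

Swap in your own session lengths and typical group sizes to see where the difference becomes meaningful for your center.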

High Reach, High Effort — Redesign Before Burnout

These programs reach a lot of students, which makes them valuable for helping students explore their career paths. But they’re also the most common drivers of staff burnout.

Examples: Classroom presentations, career fairs, large networking events. Classroom presentations are a good example — high reach by design, but every session requires prep, travel, energy, and follow-up. Scale enough of these across a semester and they become the thing that consumes your team.

From the webinar: “Classroom presentations are high reach, but staff burnout.” — Kim, webinar attendee

The question for this zone isn’t whether to cut — it’s whether to simplify. Can the format be streamlined? Can technology handle part of the delivery? Can you reduce frequency without losing meaningful reach?
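One way to test the frequency question is to check how much reach you would keep if you dropped your least-attended sessions. The sketch below uses made-up attendance and staff-hour figures, and it assumes no student attends more than one session. `trim_sessions` is a hypothetical helper, not a tool from the webinar.

```python
# (session, students attending, staff hours including prep, travel, follow-up)
# All values are hypothetical.
sessions = [
    ("Intro seminar A", 120, 4.0),
    ("Intro seminar B", 90, 4.0),
    ("Capstone C", 35, 4.0),
    ("Elective D", 15, 4.0),
    ("Elective E", 10, 4.0),
]


def trim_sessions(all_sessions, keep):
    """Keep the `keep` best-attended sessions.

    Returns (share of total reach retained, staff hours saved).
    """
    if not all_sessions:
        raise ValueError("need at least one session")
    ranked = sorted(all_sessions, key=lambda s: s[1], reverse=True)
    kept = ranked[:keep]
    total_reach = sum(s[1] for s in all_sessions)
    total_hours = sum(s[2] for s in all_sessions)
    kept_reach = sum(s[1] for s in kept)
    kept_hours = sum(s[2] for s in kept)
    return kept_reach / total_reach, total_hours - kept_hours


reach_retained, hours_saved = trim_sessions(sessions, keep=3)
print(f"Reach retained: {reach_retained:.0%}, staff hours saved: {hours_saved}")
```

In this invented example, dropping the two smallest sessions frees 8 staff hours while keeping roughly nine-tenths of the reach. Your own numbers may tell a very different story, which is the point of checking.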

Low Reach, Low Effort — Fine to Keep

These don’t put much pressure on your resources, but they also won’t solve your scale problem. Repeatable workshops that a single staff member runs periodically fit here. They’re not urgent — they’re just running quietly.

Low Reach, High Effort — Where Hard Decisions Live

This is the zone that demands your attention. Not because these are bad programs — they’re often the ones your team is most proud of — but because they carry disproportionate capacity weight relative to how many students they serve.

Examples: Employer treks, alumni mentoring programs, internship scholarships, intensive 1:1 coaching.

Consider employer treks: meaningful, students love them, but a huge logistical lift. Identifying employers, coordinating travel, preparing students, managing the day — all for a relatively small group. Alumni mentoring is similar: a lot of time identifying alumni, preparing them, matching students, keeping the program moving.

From the webinar: “These are meaningful, they work, but they carry real capacity weight. Which matters when our staff is shrinking and our expectations are rising.” — Rebekah Paré

These programs are often beloved, sometimes donor-funded, and frequently tied to a specific person’s identity on the team. That’s precisely what makes them dangerous to capacity. And it’s why they’re the place where hard conversations can no longer be deferred.

Not Just What to Cut — What Can Move

The matrix isn’t only a cutting tool. Some programs can move quadrants. A high-effort activity can become low-effort with the right technology or format change. A low-reach program can scale with a different delivery model.

When you see a program stuck in the high-effort columns, the first question should be: is there a way to shift this, or does it need to stay where it is? Sometimes the answer is a redesign that incorporates employability skills. Sometimes it’s letting go. Either way, the matrix makes the conversation possible by showing you where the structural pressure actually lives.

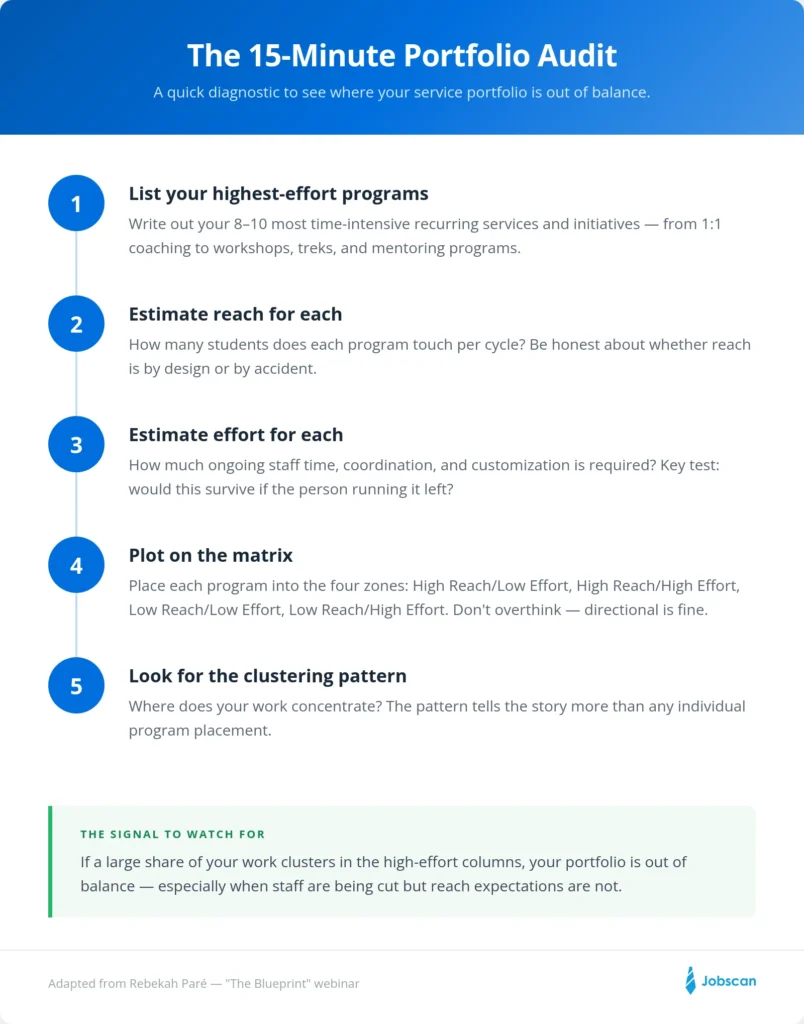

Run Your Own Audit This Week

This takes about 15 minutes. Grab a whiteboard, a spreadsheet, or a stack of sticky notes.

- List your 8–10 highest-time-investment programs — the recurring services and initiatives that fill your team’s weeks.

- Estimate reach for each — how many students does this touch per cycle? Be honest about whether reach is by design or by accident.

- Estimate effort for each — how much ongoing staff time, coordination, and customization does this require? Would it survive a staffing change?

- Plot them informally into the four zones. Don’t overthink placement — directional is fine.

- Look for the clustering pattern. If a large share of your work ends up in the high-effort columns, that’s your signal: the portfolio is out of balance. Not because your team isn’t working hard enough, but because the structure of your offerings doesn’t match your available capacity.
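The steps above can also be run as a quick script instead of on sticky notes. This is a rough sketch: the placements follow the examples used earlier in this article, the yes/no placement reflects the "directional is fine" advice, and the reading of "a large share" as more than half is an assumption made here, not a threshold from the webinar.

```python
from collections import Counter

# (program, high_reach, high_effort): directional placements only.
# Replace these with your own 8-10 highest-time-investment programs.
portfolio = [
    ("Online resume course", True, False),
    ("Tech-enabled resume reviews", True, False),
    ("Group advising sessions", True, False),
    ("Classroom presentations", True, True),
    ("Career fairs", True, True),
    ("Large networking events", True, True),
    ("Repeatable workshop", False, False),
    ("Employer treks", False, True),
    ("Alumni mentoring", False, True),
    ("Intensive 1:1 coaching", False, True),
]


def zone_name(high_reach: bool, high_effort: bool) -> str:
    reach = "High Reach" if high_reach else "Low Reach"
    effort = "High Effort" if high_effort else "Low Effort"
    return f"{reach}, {effort}"


# Count how many programs land in each zone.
zone_counts = Counter(zone_name(r, e) for _, r, e in portfolio)

# Share of programs sitting in either high-effort zone.
high_effort_share = sum(e for _, _, e in portfolio) / len(portfolio)

# Assumption: "a large share" is read here as more than half.
LARGE_SHARE = 0.5
out_of_balance = high_effort_share > LARGE_SHARE

for name, count in zone_counts.most_common():
    print(f"{name}: {count}")
print(f"High-effort share: {high_effort_share:.0%}")
```

Counting programs is the simplest version. If you have rough staff-hour estimates, weighting by hours instead of by program count would likely give a truer picture of where capacity is going.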

Pro Tip: The imbalance signal from high-effort clustering is strongest when staff are being cut but reach expectations are not.

What Comes After the Diagnostic

The Effort × Reach audit shows you where the pressure is. But it doesn’t tell you what to do about it — which programs to protect, which to redesign, and which to let go. That requires a second lens: one that looks at whether your institution is truly willing to resource and defend each program, not just endorse it.

We built a complete guide that walks you through both levels of this framework, including the second decision matrix, a 5-step mapping process, and a Canva template you can use with your team in your next planning session as you prepare students for future careers.

Download the Strategic Decision Framework →

Adapted from Rebekah Paré’s presentation at “The Blueprint: From Strategic Vision to Team Execution,” a Jobscan webinar (2026).